Design multi-cloud DNS architecture with Azure Private DNS Resolver

When you design your network architecture, it's essential to think about DNS. We must decide whether network resources are publicly available or private to our network. Public resources such as websites, APIs, or other endpoints require an authoritative DNS server to resolve their domain names. Internal network resources can use domain names too, which is more user-friendly than using IP addresses. Inside the network, we can use any domain we like, and the DNS server is responsible for resolving those domains to IP addresses. For example, a user who connects to the network over VPN would rather use a domain name than an IP address. The same applies to a multi-cloud environment: relying on IP addresses is error-prone because they can change. In this article, I'll start by explaining the basics of DNS. Next, I'll show how the Private DNS Resolver replaces a custom DNS forwarder in Azure. Lastly, I'll explain how to design a multi-cloud DNS solution with AWS Route53 Resolver and Azure's Private DNS Resolver.

What is DNS?

DNS is a hierarchical and distributed naming system that translates human-readable domain names into IP addresses. Each resource on the network or internet is identified by a unique IP address, which allows devices on the network to communicate. For example, the microsoft.com domain resolves to an IP address:

microsoft.com = 20.53.203.50

DNS records

The building blocks of the DNS system are records. There are several record types available. The most important ones for internet traffic are the A, CNAME, and NS records.

| Record type | Description |
| --- | --- |
| A | Maps a hostname to an IPv4 address. For example: www.example.com > 20.10.10.1. |
| CNAME | Maps a hostname (alias) to another hostname. For example: blog.example.com > www.example.com. |
| NS | Delegates a domain or subdomain to another set of name servers. |
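To make the record types more concrete, here is a minimal Terraform sketch of the table's records in an Azure DNS zone. It assumes an azurerm provider configuration is already in place; the zone example.com, the child zone staging.example.com, the addresses, and the resource_group_name variable are hypothetical values chosen for illustration.

```hcl
# Hypothetical input: an existing resource group for the DNS zones
variable "resource_group_name" {
  type = string
}

# Zone for the (hypothetical) second-level domain example.com
resource "azurerm_dns_zone" "example" {
  name                = "example.com"
  resource_group_name = var.resource_group_name
}

# A record: www.example.com > 20.10.10.1
resource "azurerm_dns_a_record" "www" {
  name                = "www"
  zone_name           = azurerm_dns_zone.example.name
  resource_group_name = var.resource_group_name
  ttl                 = 300
  records             = ["20.10.10.1"]
}

# CNAME record: blog.example.com > www.example.com
resource "azurerm_dns_cname_record" "blog" {
  name                = "blog"
  zone_name           = azurerm_dns_zone.example.name
  resource_group_name = var.resource_group_name
  ttl                 = 300
  record              = "www.example.com"
}

# Separate zone for the subdomain staging.example.com
resource "azurerm_dns_zone" "staging" {
  name                = "staging.example.com"
  resource_group_name = var.resource_group_name
}

# NS record in the parent zone: delegates staging.example.com
# to the name servers Azure assigned to the child zone
resource "azurerm_dns_ns_record" "staging" {
  name                = "staging"
  zone_name           = azurerm_dns_zone.example.name
  resource_group_name = var.resource_group_name
  ttl                 = 3600
  records             = tolist(azurerm_dns_zone.staging.name_servers)
}
```

With the delegation in place, the parent zone answers queries for anything under staging.example.com with a referral to the child zone's name servers, the same kind of referral you'll see in the dig trace later in this article.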

DNS name servers

The DNS hierarchy includes multiple levels. Each name server operates at one of these levels and is responsible for resolving part of the domain name. The resolver acts as the interface between the client (the user's device) and the name servers: it sends queries to name servers on behalf of the client, starting at the root level and working its way down until it reaches the actual hostname. Let's take the following fully qualified domain name (FQDN) as an example: api.staging.example.com.

| Level | Description | Name servers | Example |
| --- | --- | --- | --- |
| Root-level domain | The highest level in the DNS hierarchy. | Root servers. Twelve organizations operate the 13 root server identities (a.root-servers.net to m.root-servers.net), for example, the University of Maryland. | . |
| Top-level domain | Top-level domains (TLDs) fall into two groups: organizational (generic) and geographic (country code). Examples: com, edu, nl, uk. | TLD name servers resolve the top-level part of the domain. There are many TLD servers. | com |
| Second-level domain | Usually the main part of the domain, directly below the top-level domain. | Authoritative servers resolve the main domain and its subdomains. Sometimes a server delegates a subdomain to another set of name servers. | example |
| Third-level domain (subdomain) | Part of the main (second-level) domain; often represents a section or department. | Authoritative servers | staging |
| Hostname | The host part of the FQDN: the label that identifies the server on a network. | Authoritative servers | api |

The root server returns the name servers for the top-level domain. The TLD server returns a list of authoritative name servers for the second-level domain, and so on down the hierarchy. Let's see that in action with the dig command.

```
dig +trace learn.microsoft.com

; <<>> DiG 9.16.1-Ubuntu <<>> +trace learn.microsoft.com
;; global options: +cmd
. 81752 IN NS f.root-servers.net.
. 81752 IN NS c.root-servers.net.
. 81752 IN NS g.root-servers.net.
. 81752 IN NS l.root-servers.net.
. 81752 IN NS j.root-servers.net.
. 81752 IN NS b.root-servers.net.
. 81752 IN NS i.root-servers.net.
. 81752 IN NS m.root-servers.net.
. 81752 IN NS e.root-servers.net.
. 81752 IN NS k.root-servers.net.
. 81752 IN NS h.root-servers.net.
. 81752 IN NS d.root-servers.net.
. 81752 IN NS a.root-servers.net.
. 81752 IN RRSIG NS 8 0 518400 20230215060000 20230202050000 951 . shgCtuO0XufPswkzQkwltbwHBi4HlRtWdCGcMAS68gBzwmiEcpL2irkl ZpKY73M0vDj7Bm6Eagwp18u3FtHNDjcp2pQRXSz6dNeQAYYPsb38xrGU 3SmCSY6MYchSNX/Jc5+f49woVaErv1zhV5ZnHkjOMDY3LGjQ0V4dR2iT fk+SEydfBZDlsvkQIGv7R1Q2Gh4EtGoOu0awOKir1XD4cDHpfce6kBax OB2DQaom4J6UsHUfd+jQlQ6U/Ro7EkX9LYDHGvwq3qpTvSs0IQNg4jwu k13EtAq/F7pTecqBb/AzEbTxu3HgTUIUoWgBfQ1ICYPQAWwFzqrFvILd YXgiRQ==
;; Received 525 bytes from 172.25.128.1#53(172.25.128.1) in 869 ms

com. 172800 IN NS k.gtld-servers.net.
com. 172800 IN NS d.gtld-servers.net.
com. 172800 IN NS f.gtld-servers.net.
com. 172800 IN NS l.gtld-servers.net.
com. 172800 IN NS j.gtld-servers.net.
com. 172800 IN NS h.gtld-servers.net.
com. 172800 IN NS c.gtld-servers.net.
com. 172800 IN NS e.gtld-servers.net.
com. 172800 IN NS b.gtld-servers.net.
com. 172800 IN NS i.gtld-servers.net.
com. 172800 IN NS m.gtld-servers.net.
com. 172800 IN NS g.gtld-servers.net.
com. 172800 IN NS a.gtld-servers.net.
com. 86400 IN DS 30909 8 2 E2D3C916F6DEEAC73294E8268FB5885044A833FC5459588F4A9184CF C41A5766
com. 86400 IN RRSIG DS 8 1 86400 20230215060000 20230202050000 951 . eeX+SIe/mrEDaX+91XtQusdd0RPqPcDZubR7ChRGkR4za67c1Ax5mhpo 07KpYnmMpg4pS/mmRMLl5dbi5j9kwvkoKYw0gx8xz6Y173/qYhXm5ihf bitvV8ueuq5bbnHmAwLdf8QLj4xY92X0mbhg6UNUKCRbIQNXQsLmXqHQ ax2SPfi98Dt8/cBCnFjfk16jrekERSXJlIliampc/KljHHYMsaZBwpnh 1mxAIYwQ7xDlvPVUzQfhBTZ8VC/ZBZ4VNldCYkhn/e9eBEOReUl8Zm/G QXYhlzQ2lV44rKEwlDH9EDwbc6jV6YLqzs6MJ9ZJfmcRSzlK235X3rkl mCAQUQ==
;; Received 1210 bytes from 192.112.36.4#53(g.root-servers.net) in 79 ms

microsoft.com. 172800 IN NS ns1-39.azure-dns.com.
microsoft.com. 172800 IN NS ns2-39.azure-dns.net.
microsoft.com. 172800 IN NS ns3-39.azure-dns.org.
microsoft.com. 172800 IN NS ns4-39.azure-dns.info.
CK0POJMG874LJREF7EFN8430QVIT8BSM.com. 86400 IN NSEC3 1 1 0 - CK0Q2D6NI4I7EQH8NA30NS61O48UL8G5 NS SOA RRSIG DNSKEY NSEC3PARAM
CK0POJMG874LJREF7EFN8430QVIT8BSM.com. 86400 IN RRSIG NSEC3 8 2 86400 20230207052257 20230131041257 36739 com. bIC3JRS3i5tgfH6Kn2lqyHxTgaWT5HPky5puq3ue83uT+ahEG6nNnuR8 ydUphSSrvIYJBJ1ny0zEG1AG/4NLRiaULJzu4TCW7i6Nh5uD/7x+n1Jp v87utrvOtBslzssHr4rBX/tyi/k8dzDl4DwWdeaVdY4aTiP++q/ePyOI sYiaflykYTR9YJVvL4AyoXClBFGMW9MUWLv6DtMNl2w8Lg==
TCQ78V56RPB9M9CO6K6FI9UOGRT276QB.com. 86400 IN NSEC3 1 1 0 - TCQ7CFRP8QG6FN02PJIBJRN72UVR8TC1 NS DS RRSIG
TCQ78V56RPB9M9CO6K6FI9UOGRT276QB.com. 86400 IN RRSIG NSEC3 8 2 86400 20230207060610 20230131045610 36739 com. KrfiVRFq7HGyXtUeJhOvwsJPU0eMjbQUOzXPWsIjxVajaExEcajsOJhT 0dZBzTZmZNbt3m40Uha1Jc6/8rEeBvJbkTWfAAKnfu4P9Tjl/JRq9fae hoDMU15KxLkpAZCku+WEnkJhcrlzvv7ddXbytJtCcOh3xepRbfJnF5aZ Tsn3B5SYatUNK5xb/7PUQDbL4eouBjjTpparqhVpxibFOQ==
;; Received 747 bytes from 192.54.112.30#53(h.gtld-servers.net) in 19 ms

learn.microsoft.com. 3600 IN CNAME learn-public.trafficmanager.net.
;; Received 93 bytes from 13.107.206.39#53(ns4-39.azure-dns.info) in 10 ms
```

In the output, you can see that g.root-servers.net returned the TLD name servers for com. The TLD server h.gtld-servers.net then returned the authoritative name servers for microsoft.com, such as ns1-39.azure-dns.com. These servers are hosted by Microsoft (Azure DNS) and hold the DNS records for the domain. Finally, ns4-39.azure-dns.info answered with a CNAME record that points to the alias learn-public.trafficmanager.net.

If I run dig learn.microsoft.com without +trace, the resolver follows the chain of CNAME records for me, and we see the final destination: 23.222.53.128.

```
dig learn.microsoft.com

; <<>> DiG 9.16.1-Ubuntu <<>> learn.microsoft.com
;; global options: +cmd
;; Got answer:
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 15903
;; flags: qr rd ad; QUERY: 1, ANSWER: 5, AUTHORITY: 0, ADDITIONAL: 0
;; WARNING: recursion requested but not available

;; QUESTION SECTION:
;learn.microsoft.com. IN A

;; ANSWER SECTION:
learn.microsoft.com. 0 IN CNAME learn-public.trafficmanager.net.
learn-public.trafficmanager.net. 0 IN CNAME learn.microsoft.com.edgekey.net.
learn.microsoft.com.edgekey.net. 0 IN CNAME learn.microsoft.com.edgekey.net.globalredir.akadns.net.
learn.microsoft.com.edgekey.net.globalredir.akadns.net. 0 IN CNAME e13636.dscb.akamaiedge.net.
e13636.dscb.akamaiedge.net. 0 IN A 23.222.53.128

;; Query time: 20 msec
;; SERVER: 172.25.128.1#53(172.25.128.1)
;; WHEN: Thu Feb 02 14:10:40 CET 2023
;; MSG SIZE rcvd: 412
```

Internal DNS server

When designing your network, it's easier to use human-readable names for your VMs and other network resources than IP addresses. A VM inside the network can communicate with another VM using a domain name, and the DNS server inside the network is responsible for resolving these names. By default, resources inside a VNET send their DNS queries to the virtual IP address 168.63.129.16 (Azure-provided DNS). Microsoft owns this static IP address, and it's the same in all regions.

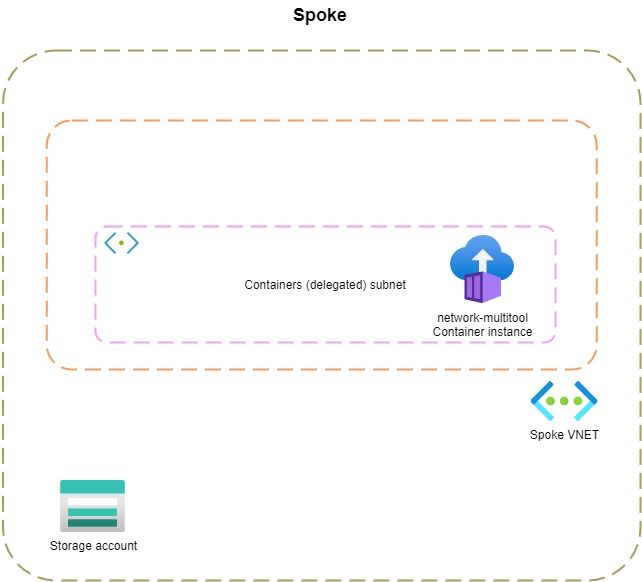

Let's see this in action. I created a simple VNET with a container instance that uses the network-multitool image. This image includes all kinds of networking utilities. I also created a storage account. If I execute nslookup (from inside the container) for the blob storage endpoint, you'll see that it sends a request to 168.63.129.16.

```
bash-5.1# nslookup stdemoprivatednsresolver.blob.core.windows.net
Server: 168.63.129.16
Address: 168.63.129.16#53

Non-authoritative answer:
stdemoprivatednsresolver.blob.core.windows.net canonical name = blob.ams07prdstr06a.store.core.windows.net.
Name: blob.ams07prdstr06a.store.core.windows.net
Address: 20.150.74.100
```

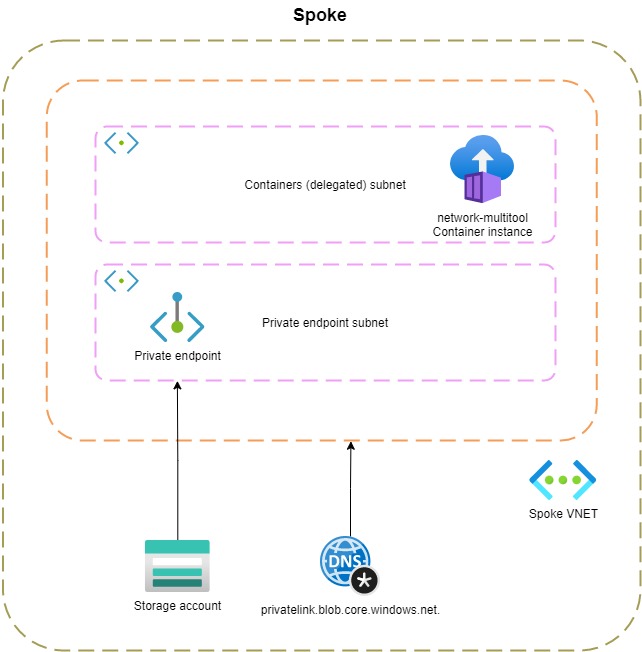

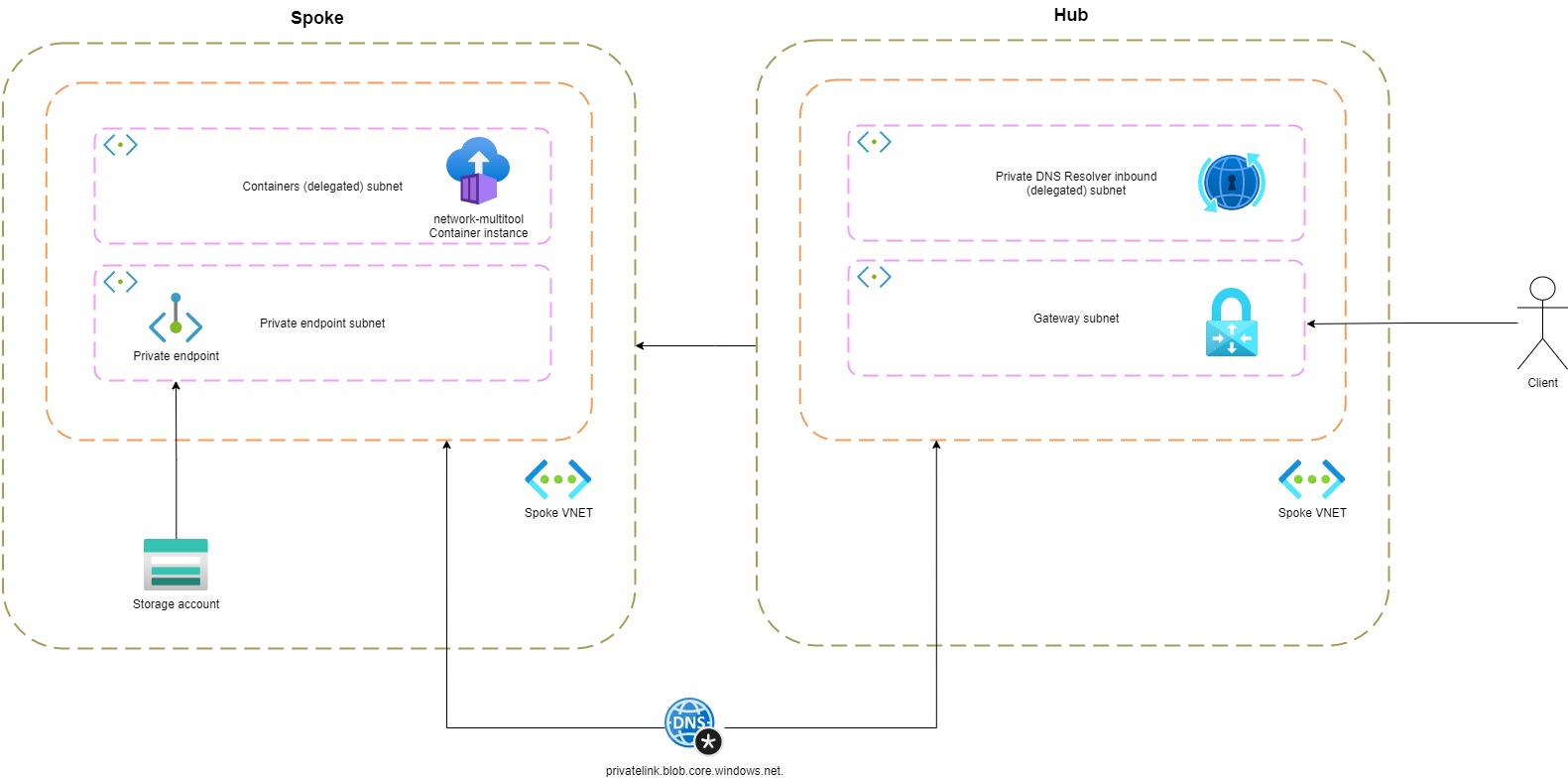

20.150.74.100 is a public IP address, so traffic from the container instance leaves the VNET and reaches the storage account through its public endpoint. In most cases, we don't want this. Private Link is an Azure service that lets us communicate with PaaS services over a private endpoint in our VNET. In the following diagram, you can see that I created a separate subnet for private endpoints in my VNET. Let's run the nslookup command once more.

```
bash-5.1# nslookup stdemoprivatednsresolver.blob.core.windows.net
Server: 168.63.129.16
Address: 168.63.129.16#53

Non-authoritative answer:
stdemoprivatednsresolver.blob.core.windows.net canonical name = stdemoprivatednsresolver.privatelink.blob.core.windows.net.
Name: stdemoprivatednsresolver.privatelink.blob.core.windows.net
Address: 10.1.0.4
```

The same virtual IP is still used to resolve the blob storage endpoint. This time, however, the storage account name resolves to an alias (CNAME) in the privatelink.blob.core.windows.net domain, and that name resolves to a private IP address allocated from my private endpoint subnet. By using Private Link, the traffic from my container instance to the storage account doesn't leave the VNET.

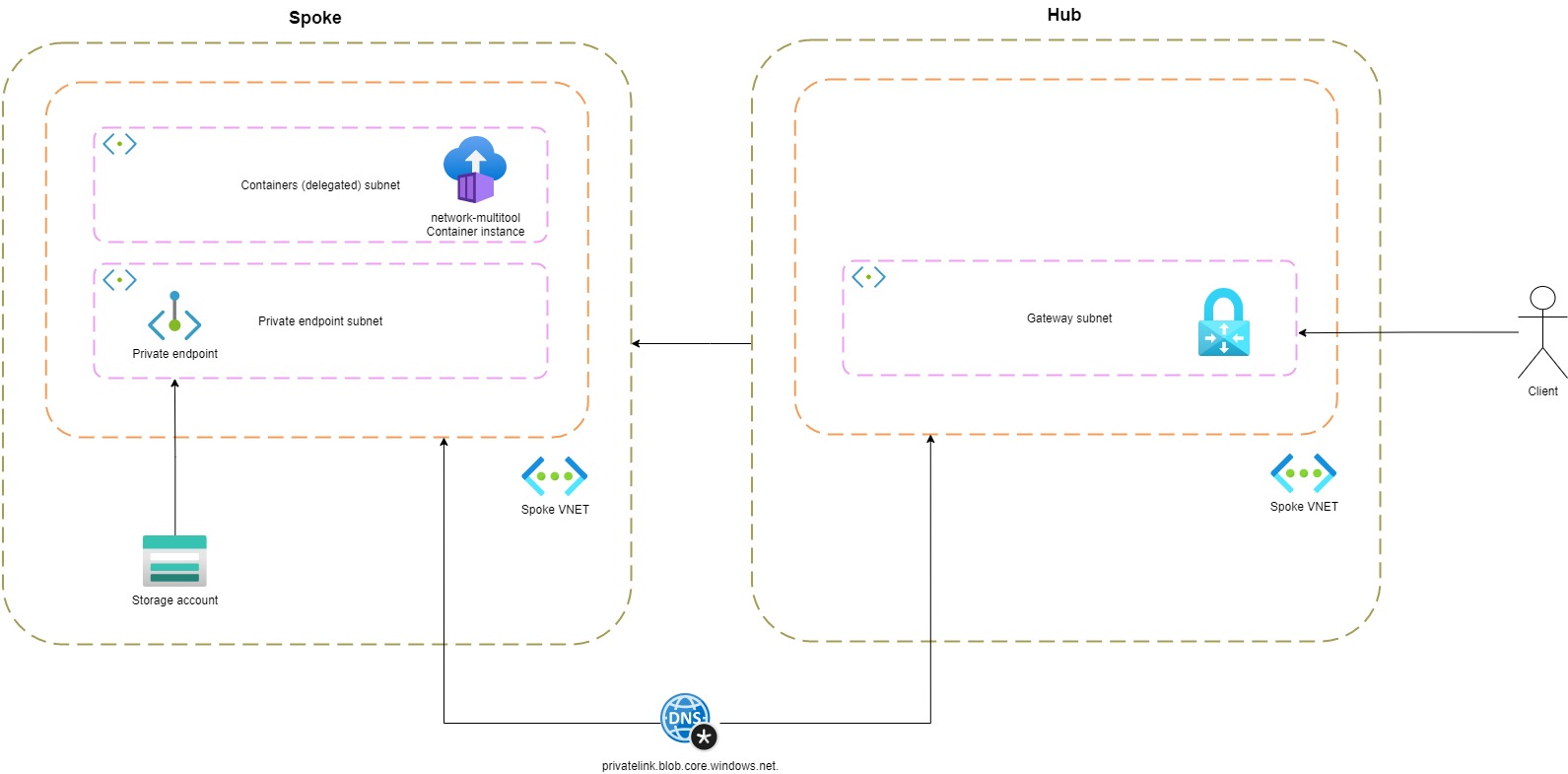

This is all working nicely, but let's introduce a point-to-site VPN.

When I'm connected to the VPN and execute nslookup from my local machine:

```
nslookup stdemoprivatednsresolver.blob.core.windows.net
Server: ns02.upclive.nl
Address: 213.46.228.196

Non-authoritative answer:
Name: blob.ams07prdstr06a.store.core.windows.net
Address: 20.150.74.100
Aliases: stdemoprivatednsresolver.blob.core.windows.net
         stdemoprivatednsresolver.privatelink.blob.core.windows.net
```

A public IP address is returned even though I'm connected to the VPN, because my machine still sends its DNS queries to my ISP's DNS server (ns02.upclive.nl).

Private Link creates two resources: a private endpoint and a private DNS zone. The private DNS zone (privatelink.blob.core.windows.net) holds an A record (stdemoprivatednsresolver = 10.1.0.4) that points to the private endpoint address, and the zone is linked to the VNET. Each time a resource inside the network resolves stdemoprivatednsresolver.blob.core.windows.net, the private endpoint address is returned. However, this is not the case for clients connected over VPN: they don't query the Azure-provided DNS at 168.63.129.16, which is only reachable from inside Azure, so they never see the private DNS zone.
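The pieces Private Link sets up can also be declared in Terraform. The following is a minimal sketch, assuming an azurerm provider configuration is in place and that the storage account, the private endpoint subnet, and the VNET already exist and are passed in as hypothetical variables; all resource names are hypothetical too. The private_dns_zone_group block asks Azure to register the endpoint's private IP as an A record in the privatelink zone.

```hcl
# Hypothetical inputs
variable "resource_group_name" { type = string }
variable "location" { type = string }
variable "vnet_id" { type = string }
variable "private_endpoint_subnet_id" { type = string }
variable "storage_account_id" { type = string }

# Private DNS zone used for blob private endpoints
resource "azurerm_private_dns_zone" "blob" {
  name                = "privatelink.blob.core.windows.net"
  resource_group_name = var.resource_group_name
}

# Link the zone to the VNET so that 168.63.129.16 answers from it
# for resources inside this VNET
resource "azurerm_private_dns_zone_virtual_network_link" "blob" {
  name                  = "link-privatelink-blob"
  resource_group_name   = var.resource_group_name
  private_dns_zone_name = azurerm_private_dns_zone.blob.name
  virtual_network_id    = var.vnet_id
}

# Private endpoint for the storage account's blob sub-resource
resource "azurerm_private_endpoint" "blob" {
  name                = "pe-storage-blob"
  location            = var.location
  resource_group_name = var.resource_group_name
  subnet_id           = var.private_endpoint_subnet_id

  private_service_connection {
    name                           = "psc-storage-blob"
    private_connection_resource_id = var.storage_account_id
    subresource_names              = ["blob"]
    is_manual_connection           = false
  }

  # Registers the endpoint's private IP as an A record in the zone above
  private_dns_zone_group {
    name                 = "default"
    private_dns_zone_ids = [azurerm_private_dns_zone.blob.id]
  }
}
```

Note that the VNET link is what limits the zone's visibility: only resources that resolve through a linked VNET get the private answer, which is exactly why the VPN client above still receives the public IP.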

We need to have a DNS forwarder in place to solve this. First, let's take a look at a custom DNS forwarder.

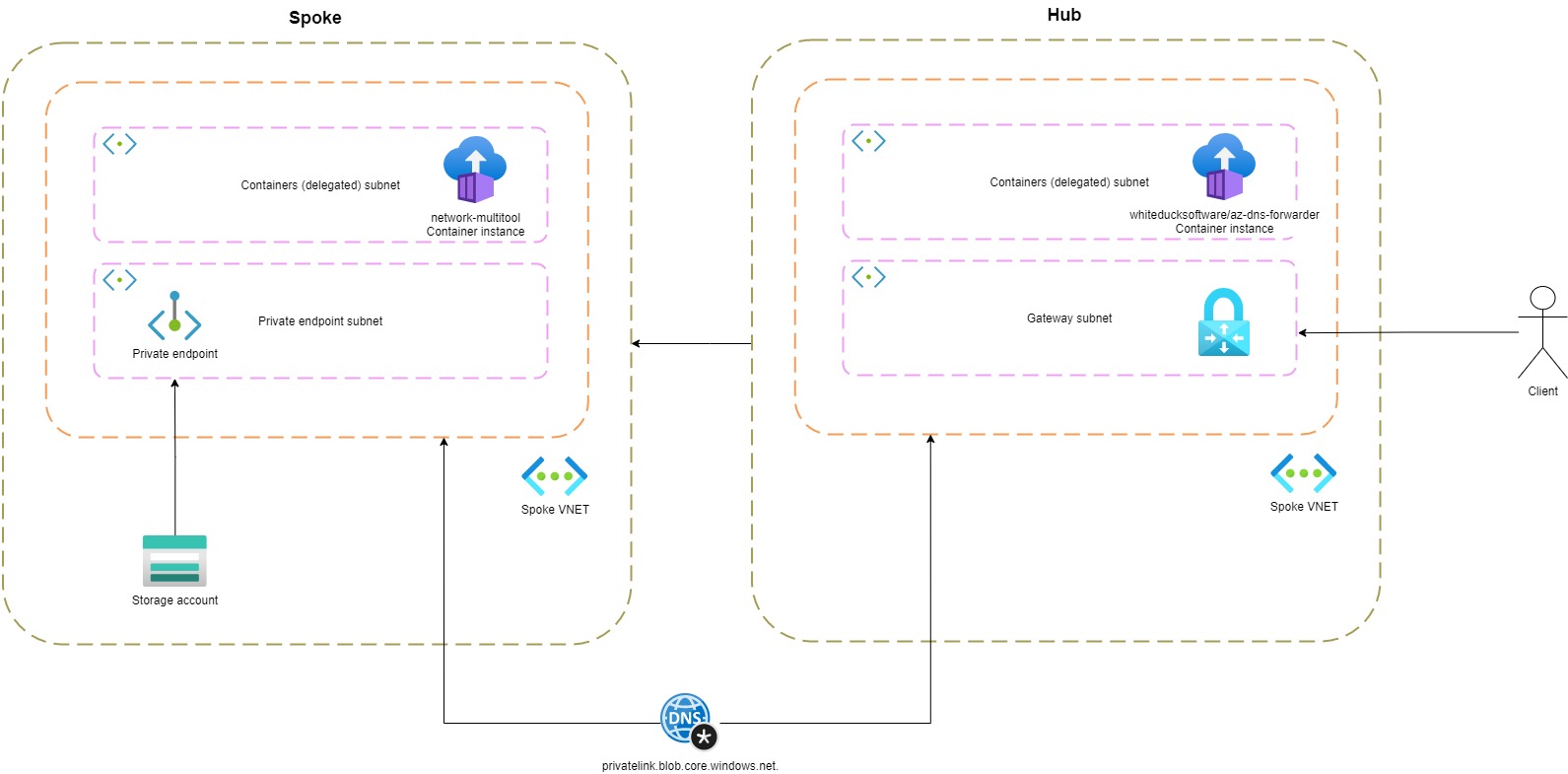

Custom DNS forwarder

There are many Docker images available that you can use for DNS forwarding. For this demonstration, I used an image called whiteducksoftware/az-dns-forwarder. I created a container instance in the hub VNET. The private DNS zone (created by Private Link) is linked to the hub VNET.

I configured the IP of the DNS forwarder (container instance) in my azurevpnconfig.xml.

```xml
<clientconfig>
  <dnsservers>
    <dnsserver>10.0.128.4</dnsserver>
  </dnsservers>
</clientconfig>
```

If I execute nslookup again with the new DNS server (10.0.128.4) in place, you'll see I get back the private endpoint IP address.

```
nslookup stdemoprivatednsresolver.blob.core.windows.net
Server: UnKnown
Address: 10.0.128.4

Non-authoritative answer:
Name: stdemoprivatednsresolver.privatelink.blob.core.windows.net
Address: 10.1.0.4
Aliases: stdemoprivatednsresolver.blob.core.windows.net
```

Container instance running DNS forwarder image in Terraform

```hcl
resource "azurerm_container_group" "cg_hub_dns" {
  # Only deployed when the custom DNS forwarder option is enabled
  count               = var.deploy_custom_dns ? 1 : 0
  name                = "ci-dns-forwarder"
  location            = azurerm_resource_group.rg.location
  resource_group_name = azurerm_resource_group.rg.name
  # Private IP in the hub VNET: the address VPN clients use as their DNS server
  ip_address_type     = "Private"
  os_type             = "Linux"
  subnet_ids          = [azurerm_subnet.subnet_hub_containers.id]

  container {
    name   = "dns-forwarder"
    image  = "ghcr.io/whiteducksoftware/az-dns-forwarder/az-dns-forwarder:latest"
    cpu    = "1"
    memory = "0.5"

    # DNS queries arrive on UDP port 53
    ports {
      port     = 53
      protocol = "UDP"
    }
  }
}
```

A custom DNS solution comes with downsides: you have to manage updates, scaling, and other maintenance of the container yourself. In 2022, Azure introduced a managed DNS service: Private DNS Resolver.

What is Private DNS Resolver?

The Private DNS Resolver is a fully managed DNS service that lets you resolve names in private DNS zones from outside the VNET. Its biggest advantage is that it's fully managed, which eliminates the need for dedicated VMs or container instances. The Private DNS Resolver can handle millions of requests. To create the service, you'll need a dedicated, delegated subnet for the inbound endpoint and, optionally, another one for the outbound endpoint.

The inbound endpoint is responsible for incoming DNS queries. It gets a private IP address from the delegated subnet. The subnet must have an address range between /24 and /28. Usually, you'll create this service in the hub network.
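A delegated subnet for the inbound endpoint might look like the following minimal sketch. It reuses the resource name subnet_private_dns_resolver_inbound that the inbound endpoint code in this article references, but the subnet name, the address range 10.0.192.0/28, and the input variables are hypothetical. The validation block encodes the /24 to /28 size requirement, so an out-of-range prefix fails at plan time instead of at deployment.

```hcl
# Hypothetical inputs
variable "resource_group_name" { type = string }
variable "hub_vnet_name" { type = string }

variable "inbound_subnet_prefix" {
  description = "Address range for the inbound endpoint subnet (hypothetical default)."
  type        = string
  default     = "10.0.192.0/28"

  validation {
    # Private DNS Resolver endpoint subnets must be between /24 and /28
    condition = (
      tonumber(split("/", var.inbound_subnet_prefix)[1]) >= 24 &&
      tonumber(split("/", var.inbound_subnet_prefix)[1]) <= 28
    )
    error_message = "The inbound endpoint subnet must be between /24 and /28."
  }
}

resource "azurerm_subnet" "subnet_private_dns_resolver_inbound" {
  name                 = "snet-dns-resolver-inbound"
  resource_group_name  = var.resource_group_name
  virtual_network_name = var.hub_vnet_name
  address_prefixes     = [var.inbound_subnet_prefix]

  # Delegate the subnet to the Private DNS Resolver service
  delegation {
    name = "dns-resolver"

    service_delegation {
      name    = "Microsoft.Network/dnsResolvers"
      actions = ["Microsoft.Network/virtualNetworks/subnets/join/action"]
    }
  }
}
```

The outbound endpoint needs its own subnet with the same kind of delegation; an endpoint subnet can't be shared with other resources.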

With the Private DNS Resolver in place, I executed nslookup again from my local machine. Below you can see that the Private DNS Resolver (10.0.192.4) resolves the private endpoint address.

```
nslookup stdemoprivatednsresolver.blob.core.windows.net 10.0.192.4
Server: UnKnown
Address: 10.0.192.4

Non-authoritative answer:
Name: stdemoprivatednsresolver.privatelink.blob.core.windows.net
Address: 10.1.0.4
Aliases: stdemoprivatednsresolver.blob.core.windows.net
```

Private DNS Resolver inbound endpoint in Terraform

```hcl
# The resolver itself is deployed into the hub VNET
resource "azurerm_private_dns_resolver" "hub_private_dns_resolver" {
  count               = var.deploy_private_dns_resolver ? 1 : 0
  name                = "pdr-resolver"
  resource_group_name = azurerm_resource_group.rg.name
  location            = azurerm_resource_group.rg.location
  virtual_network_id  = azurerm_virtual_network.vnet_hub.id
}

# Inbound endpoint: receives DNS queries on a private IP from its delegated subnet
resource "azurerm_private_dns_resolver_inbound_endpoint" "hub_private_dns_resolver_inbound" {
  count                   = var.deploy_private_dns_resolver ? 1 : 0
  name                    = "inbound-endpoint"
  private_dns_resolver_id = azurerm_private_dns_resolver.hub_private_dns_resolver[0].id
  location                = azurerm_private_dns_resolver.hub_private_dns_resolver[0].location

  ip_configurations {
    private_ip_allocation_method = "Dynamic"
    subnet_id                    = azurerm_subnet.subnet_private_dns_resolver_inbound.id
  }
}
```
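The inbound endpoint's IP address is what the VPN client configuration and, later, the AWS forwarding rule point to. A small sketch, assuming the inbound endpoint resource from the snippet above, exposes that address as a Terraform output so you don't have to look it up in the portal; the output name is hypothetical.

```hcl
# Hypothetical output: the private IP assigned to the inbound endpoint
# (10.0.192.4 in the nslookup example above). try() returns null when
# the resolver is not deployed (count = 0).
output "private_dns_resolver_inbound_ip" {
  value = try(
    azurerm_private_dns_resolver_inbound_endpoint.hub_private_dns_resolver_inbound[0].ip_configurations[0].private_ip_address,
    null
  )
}
```

Running terraform output private_dns_resolver_inbound_ip after an apply then prints the address to put in the dnsserver element of the VPN client profile.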

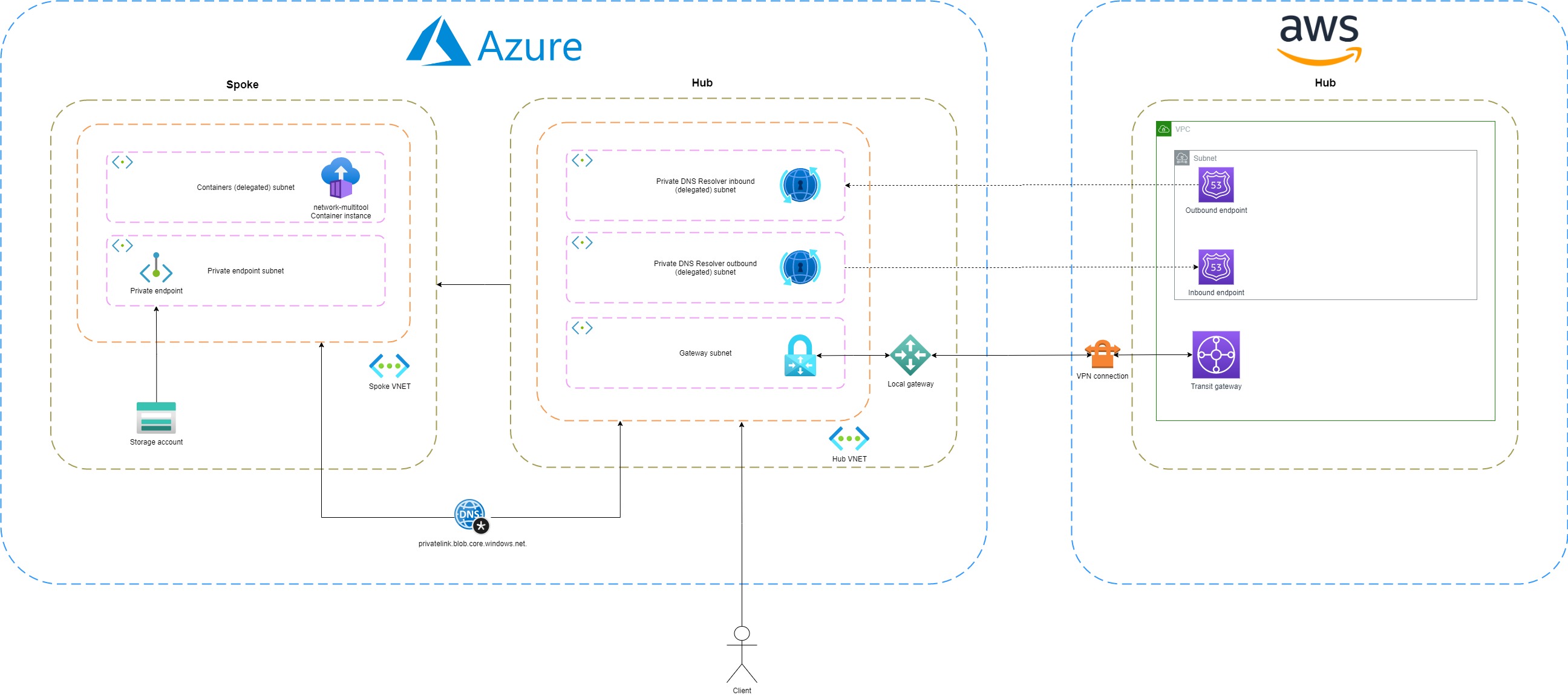

Multi-cloud with AWS Route53 Resolver and Azure Private DNS Resolver

To get network connectivity up and running between AWS and Azure, we first need to set up a site-to-site VPN. When this is in place, traffic can be routed from the AWS VPC to the Azure VNET over a secure connection. As mentioned earlier, I recommend using domain names over IP addresses, so we also need a DNS solution. For example, an EC2 instance (VM) needs to connect to an Azure storage account. Private Link is already enabled to ensure traffic doesn't leave the private network. However, the same problem occurs as with the point-to-site VPN connection: the endpoint resolves to a public IP address. To solve this, a DNS server must forward the query to our Private DNS Resolver.

Fortunately, AWS and Azure offer similar solutions for this problem. AWS's Route53 Resolver is the equivalent of Azure's Private DNS Resolver. In Route53 Resolver, we can configure an outbound endpoint with a forwarding rule that sends all queries for the blob.core.windows.net domain to the inbound endpoint of the Private DNS Resolver. Of course, we can also make it work the other way around.
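On the AWS side, this forwarding could look roughly like the following Terraform sketch, using the hashicorp/aws provider. The VPC, the subnets, the security group, and the Azure inbound endpoint IP are hypothetical input variables. The sketch assumes the security group allows DNS traffic (UDP and TCP port 53) towards Azure, and it places the endpoint's IP addresses in at least two subnets for redundancy.

```hcl
# Hypothetical inputs
variable "vpc_id" { type = string }
variable "subnet_ids" { type = list(string) } # two or more subnets, ideally in different AZs
variable "resolver_security_group_id" { type = string }
variable "azure_inbound_endpoint_ip" { type = string } # Private DNS Resolver inbound endpoint

# Outbound endpoint: the network interfaces Route53 Resolver uses
# to send queries out of the VPC towards Azure
resource "aws_route53_resolver_endpoint" "to_azure" {
  name               = "outbound-to-azure"
  direction          = "OUTBOUND"
  security_group_ids = [var.resolver_security_group_id]

  # One IP address per subnet
  dynamic "ip_address" {
    for_each = var.subnet_ids
    content {
      subnet_id = ip_address.value
    }
  }
}

# Forward everything under blob.core.windows.net to the Azure inbound endpoint
resource "aws_route53_resolver_rule" "azure_blob" {
  name                 = "forward-azure-blob"
  domain_name          = "blob.core.windows.net"
  rule_type            = "FORWARD"
  resolver_endpoint_id = aws_route53_resolver_endpoint.to_azure.id

  target_ip {
    ip   = var.azure_inbound_endpoint_ip
    port = 53
  }
}

# A rule only applies to the VPCs it is associated with
resource "aws_route53_resolver_rule_association" "azure_blob" {
  resolver_rule_id = aws_route53_resolver_rule.azure_blob.id
  vpc_id           = var.vpc_id
}
```

Forwarding the public blob.core.windows.net zone, rather than only the privatelink zone, lets the Azure side follow the CNAME to the private endpoint record itself.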

Like the inbound endpoint, the Azure outbound endpoint needs its own delegated subnet, with an address range between /24 and /28. The outbound endpoint forwards DNS queries to another DNS server based on a forwarding ruleset. A ruleset consists of rules, each containing a domain name and the IP address of the target DNS server. In my example, the target DNS server is the IP address of the Route53 Resolver inbound endpoint.

If a user connected to the VPN wants to resolve a name in a private hosted zone defined in Route53, the request first travels to the inbound endpoint of Azure's Private DNS Resolver. A forwarding rule is configured for this domain name, so the resolver forwards the request through the outbound endpoint to the Route53 Resolver inbound endpoint, which returns the IP address.
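For this direction, Route53 Resolver needs an inbound endpoint that Azure can forward to, and the private hosted zone must be associated with the VPC the endpoint lives in. The following is a minimal sketch with hypothetical variables (including the zone name); it assumes the security group allows inbound UDP and TCP port 53 from the Azure address space. The output lists the inbound endpoint IPs, which become the target of the Azure forwarding rule.

```hcl
# Hypothetical inputs
variable "vpc_id" { type = string }
variable "subnet_ids" { type = list(string) }
variable "resolver_security_group_id" { type = string }
variable "private_zone_name" { type = string } # your internal AWS domain

# Private hosted zone, resolvable only from the associated VPC
resource "aws_route53_zone" "private" {
  name = var.private_zone_name

  vpc {
    vpc_id = var.vpc_id
  }
}

# Inbound endpoint: accepts queries forwarded by Azure's outbound endpoint
# and answers them using the VPC's resolver, including the zone above
resource "aws_route53_resolver_endpoint" "from_azure" {
  name               = "inbound-from-azure"
  direction          = "INBOUND"
  security_group_ids = [var.resolver_security_group_id]

  dynamic "ip_address" {
    for_each = var.subnet_ids
    content {
      subnet_id = ip_address.value
    }
  }
}

# IP addresses to use as target_dns_servers in the Azure forwarding rule
output "route53_inbound_endpoint_ips" {
  value = [for a in aws_route53_resolver_endpoint.from_azure.ip_address : a.ip]
}
```

Listing every inbound endpoint IP as a separate target in the Azure rule keeps resolution working if one AWS subnet or Availability Zone is unavailable.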

Outbound endpoint in Terraform

```hcl
resource "azurerm_private_dns_resolver_outbound_endpoint" "hub_private_dns_resolver_outbound" {
  count                   = var.deploy_private_dns_resolver ? 1 : 0
  name                    = "outbound-endpoint"
  private_dns_resolver_id = azurerm_private_dns_resolver.hub_private_dns_resolver[0].id
  location                = azurerm_private_dns_resolver.hub_private_dns_resolver[0].location
  # Dedicated subnet delegated to Microsoft.Network/dnsResolvers
  subnet_id               = azurerm_subnet.subnet_private_dns_resolver_outbound.id
}

# Ruleset: groups forwarding rules and sends matching queries through the outbound endpoint
resource "azurerm_private_dns_resolver_dns_forwarding_ruleset" "private_dns_resolver_forwarding_ruleset" {
  count                                      = var.deploy_private_dns_resolver ? 1 : 0
  name                                       = "pdr-resolver-aws-ruleset"
  resource_group_name                        = azurerm_resource_group.rg.name
  location                                   = azurerm_resource_group.rg.location
  private_dns_resolver_outbound_endpoint_ids = [azurerm_private_dns_resolver_outbound_endpoint.hub_private_dns_resolver_outbound[0].id]
}

resource "azurerm_private_dns_resolver_forwarding_rule" "private_dns_resolver_forwarding_rule" {
  count                     = var.deploy_private_dns_resolver ? 1 : 0
  name                      = "pdr-resolver-aws-artifacts-rule"
  dns_forwarding_ruleset_id = azurerm_private_dns_resolver_dns_forwarding_ruleset.private_dns_resolver_forwarding_ruleset[0].id
  # Route53 private hosted zone to forward; must end with a trailing dot.
  # var.aws_private_zone_name is a hypothetical variable.
  domain_name               = var.aws_private_zone_name
  enabled                   = true

  # Target: the Route53 Resolver inbound endpoint (hypothetical variable)
  target_dns_servers {
    ip_address = var.route53_inbound_endpoint_ip
    port       = 53
  }
}
```
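A forwarding ruleset only affects DNS queries from the virtual networks it is linked to. The following sketch links the ruleset above to the hub VNET, so that queries arriving at the inbound endpoint in that VNET are matched against the AWS rule. It assumes the azurerm_virtual_network.vnet_hub resource that the resolver snippet already references; the link name is hypothetical.

```hcl
# Link the AWS ruleset to the hub VNET that hosts the resolver endpoints
resource "azurerm_private_dns_resolver_virtual_network_link" "hub_ruleset_link" {
  count                     = var.deploy_private_dns_resolver ? 1 : 0
  name                      = "link-hub-aws-ruleset"
  dns_forwarding_ruleset_id = azurerm_private_dns_resolver_dns_forwarding_ruleset.private_dns_resolver_forwarding_ruleset[0].id
  virtual_network_id        = azurerm_virtual_network.vnet_hub.id
}
```

The same ruleset can be linked to spoke VNETs as well, so resources there can resolve the AWS zone without their own forwarders.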

Summary

It's important to think about DNS when designing a network architecture. If you use private DNS zones, you need a DNS forwarder to resolve them from outside the VNET. The Private DNS Resolver is a managed DNS service; its main benefit is that you don't have to worry about updates or scaling. It's pretty simple to use, but remember that it requires a dedicated, delegated subnet for the inbound endpoint and another one for the outbound endpoint.

You can find the full (Terraform) source code on my GitHub account.
